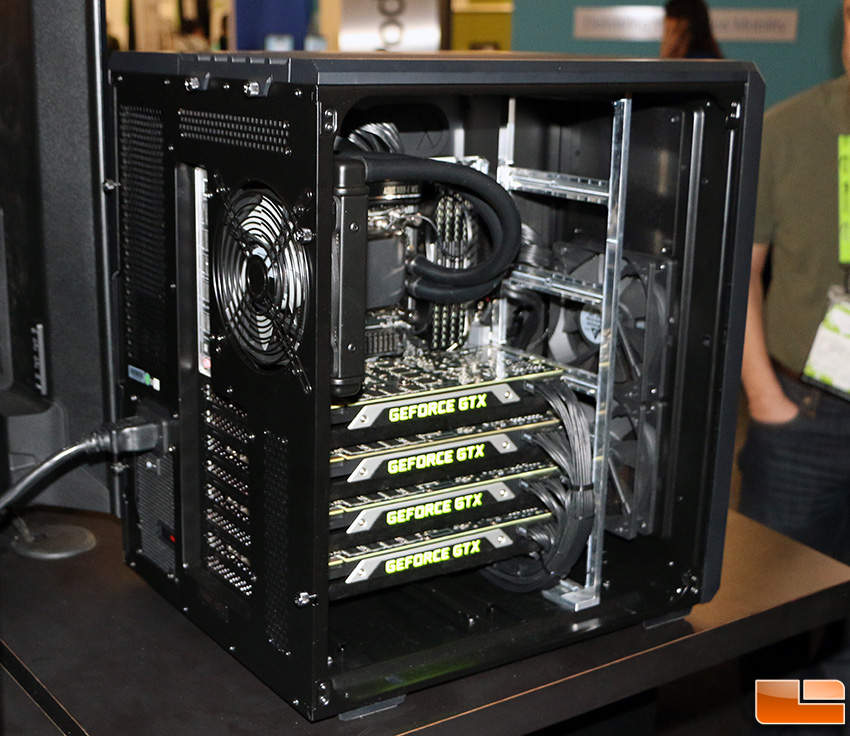

However, most GPUs have a width of two PCIe slots, so if you plan to use multiple GPUs, you will need a motherboard with enough space between PCIe slots to accommodate these GPUs.īy having the optimal amount of GPUs for a deep learning workstation, you can make your entire deep learning model run at peak possible efficiency. Most motherboards will allow up to four GPUs. Your motherboard will serve an essential role in this process because it will have many PCIe ports to support additional GPUs. By multiplying the amount of data processed, these neural networks can learn and begin creating forecasts more quickly and efficiently. However, the more data points are being manipulated and used for input and forecasting, the more difficult it will be to work on all tasks.Īdding a GPU opens an extra channel for the deep learning model to process data quicker and more efficiently. Deep Learning models can be taught more quickly by doing all operations at once with the help of a GPU rather than one after the other. This is why you need GPUs for deep learning. This allows the deep learning model to form predictions and forecasts of what to expect based on data inputs with expected or determined results.

The training phase of a deep learning model is the most resource-intensive task for any neural network.Ī neural network scans data for input during the training phase to compare against standard data. Now, we can discuss the importance of how many GPUs to use for deep learning. In this case, we recommend examining data center GPUs. With all of this in mind, we highly recommend starting with high-quality consumer-grade GPUs unless you know you will be building or upgrading a large-scale deep learning workstation.

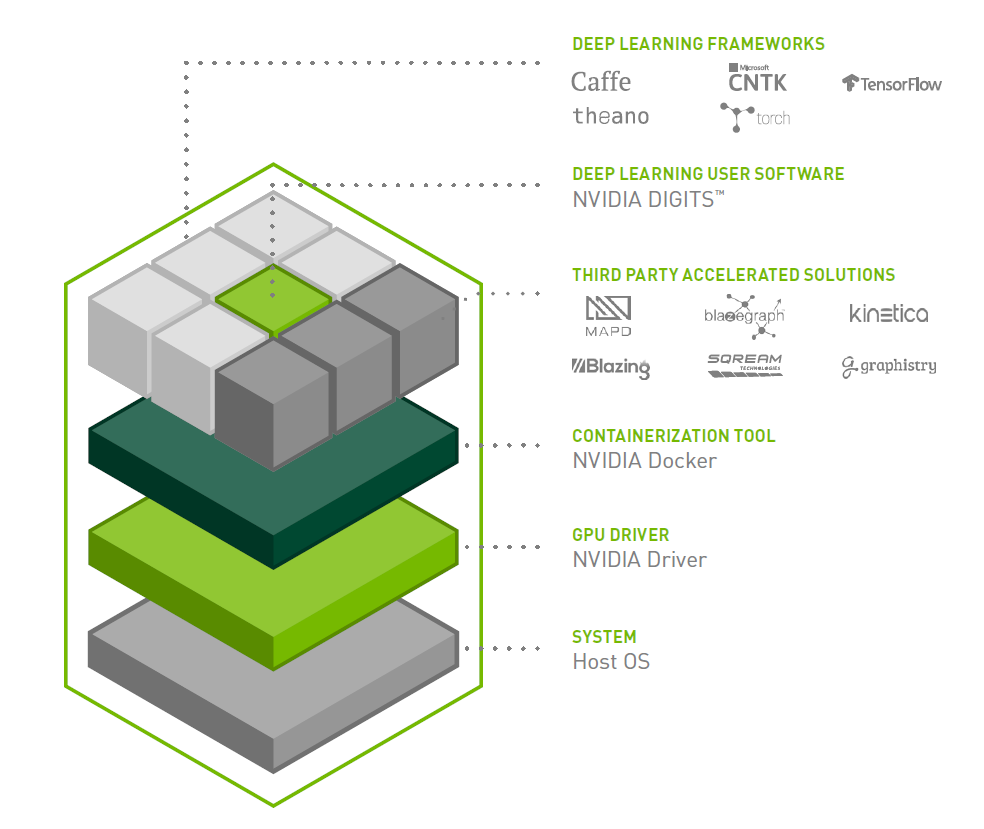

These will be far above anything one might do on their budget or utilize to its full potential on their own. These move beyond simply hobby projects, small business type of projects, and into the realm of corporation-level usage. Systems are plug-and-play, and they may be deployed on bare metal or in containers.

Machine learning and deep learning procedures are the focus of these systems. Managed workstations and servers are full-stack, enterprise-grade systems. These GPUs are built for large-scale projects and deliver enterprise-level performance. Truly, these are the best GPUs for deep learning right now. However, as you begin to get into data points in the billions, these types of GPUs will begin to fall off in efficiency and use of time.ĭatacenter GPUs are the industry standard for deep learning workstations in production. These GPUs can cheaply upgrade or build workstations and are excellent for model development and low-level testing. Broadly, though, there are three main categories of GPUs you can choose from: consumer-grade GPUs, data center GPUs, and managed workstations, or servers, among these possibilities.Ĭonsumer-grade GPUs are smaller and cheaper but aren’t quite up to the task of handling large-scale deep learning projects, although they can serve as a starting point for workstations. As we will discuss later, NVIDIA dominates the market for GPUs, especially for their uses in deep learning and neural networks. There is a wide range of GPUs to choose from for your deep learning workstation. Most people in the AI community recommend GPUs for deep learning instead of CPUs for this very reason. This means you can get more done and done faster by utilizing GPUs instead of CPUs. GPUs can process multiple processes simultaneously, whereas CPUs tackle processes in order one at a time.

In brief, CPUs are probably the simplest and easiest solution for deep learning, but the results vary on the efficiency of CPUs when compared to GPUs. When you are diving into the world of deep learning, there are two choices for how your neural network models will process information: by utilizing the processing power of CPUs or by using GPUs. Let’s discuss whether GPUs are a good choice for a deep learning workstation, how many GPUs are needed for deep learning, and which GPUs are the best picks for your deep learning workstation. We’ll give our best recommendations that excel in these areas at the end of this guide. Two companies own the GPU market: NVIDIA and AMD. When it comes to GPU selection, you want to pay close attention to three areas: high performance, memory, and cooling. The GPU you choose is perhaps the most crucial decision for your deep learning workstation. Is one adequate, or should you add 2 or 4? If you build or upgrade your deep learning workstation, you will inevitably wonder how many GPUs you need for an AI workstation focused on deep learning or machine learning. Choosing the Right Number of GPUs for a Deep Learning Workstation
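
Because most GPUs are two PCIe slots wide, slot spacing rather than slot count often decides how many cards a motherboard can actually hold. Here is a minimal Python sketch of that check. The helper `max_gpus_that_fit` and both board layouts are hypothetical, made up for illustration, and the sketch ignores case clearance, power, and cooling, so treat it as a rough sanity check and confirm against your motherboard manual.

```python
def max_gpus_that_fit(slot_positions, gpu_width=2):
    """Count how many GPUs fit without physically overlapping.

    slot_positions: expansion-slot positions (1 = topmost) that carry a
        full-length PCIe x16 connector. Hypothetical layout data.
    gpu_width: how many slot positions one card covers (2 for most GPUs).
    """
    count = 0
    next_free = 0  # first slot position not covered by an installed card
    for pos in sorted(slot_positions):
        if pos >= next_free:
            # The connector is not blocked by the card above it.
            count += 1
            next_free = pos + gpu_width
    return count


# Hypothetical board with x16 connectors packed at positions 1-4.
tight_board = [1, 2, 3, 4]
# Hypothetical board with x16 connectors at every other position.
spaced_board = [1, 3, 5, 7]

print(max_gpus_that_fit(tight_board))   # 2
print(max_gpus_that_fit(spaced_board))  # 4
```

Both boards have four connectors, but only the double-spaced layout leaves room for four dual-slot cards. Passing `gpu_width=3` models the thicker coolers found on some cards.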
